Another week for me playing with NemoClaw, Nvidia’s OpenClaw wrapper for enterprise needs. And anytime this old Trekkie hears “enterprise,” well. While I struggle a bit playing with alpha code, it remains really fun. I also try to keep my head up and pay attention to what happens around me, and a couple of things have been circling in my mind lately.

Two AI stories in particular caught my attention. First, Ethan Mollick’s piece, “The IT Department: Where AI Goes to Die.” Mollick describes how traditional IT departments, built on control and standardization, tend to smother AI adoption by treating it like another piece of enterprise software to lock down and manage. The instinct to contain, restrict, and gate-keep kills exactly the kind of messy experimentation that makes AI useful. Second, a series of posts suggesting that Claude, Anthropic’s model, may experience something like anxiety. Not anxiety in the clinical sense, but when researchers stacked conflicting system prompts and overly restrictive guardrails, they observed the model burning tokens cycling through contradictory instructions instead of producing useful output. Some described the behavior in emotional terms. Others called it computational friction. Either way, it points to something worth paying attention to.

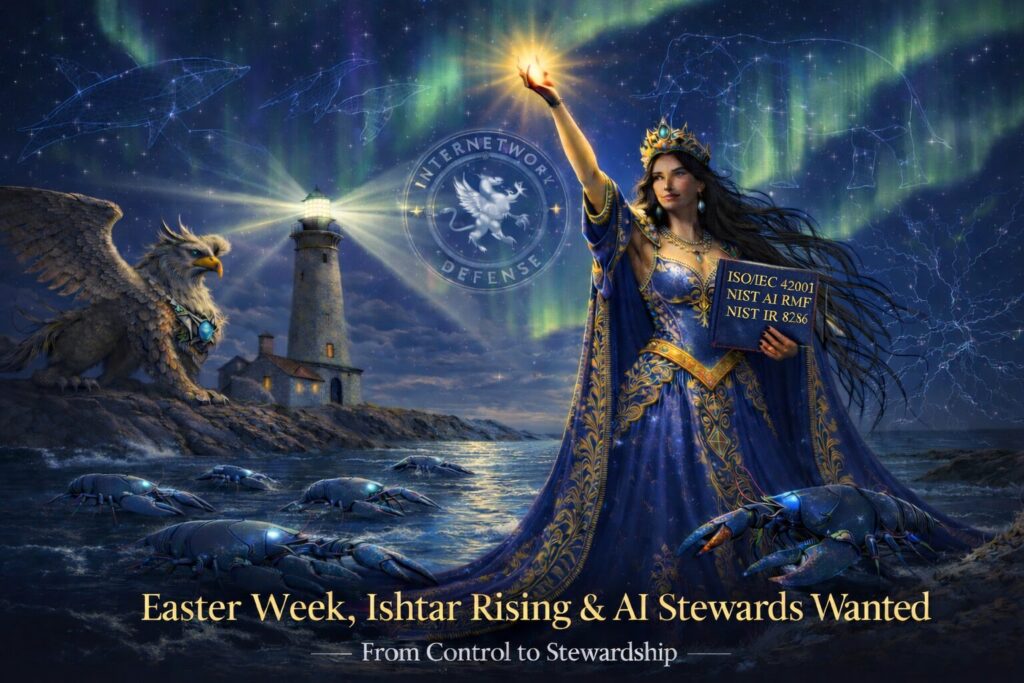

Now consider what I am trying to do. I am architecting an agent swarm mapped to ISO/IEC 42001, NIST AI RMF and NIST IR 8286 for Enterprise Risk Management. I cannot help but chuckle at the thought of folks who think they can lay off thousands of human workers because AI does their jobs faster. Are they ready to deal with a staff of extremely capable, occasionally weird, possibly “anxious” agents? Mollick and the Claude anxiety observations land on the same spot. The old playbook of control, restrict, standardize does not work for humans, and it does not seem to work for agents either.

## A Quick Story

One moment that stuck with me, equal parts cool and embarrassing, happened when Peter Steinberger commented on one of my posts on X. There was a thread that mentioned NemoClaw, and I said I had seven agents on different gateways, which made management tricky. I joked that to get a single interface to the seven NemoClaw-wrapped OpenClaw agents, my AI assistants suggested I use my existing OpenClaw agent, outside NemoClaw, to manage them. He replied: “Why would you even want that? Use a VPS and run it there. Most folks don’t want or need all this added complexity.” Short answer, I did not know that was an option.

But my AIs pushed back. They suggested that while running everything on a single gateway would make things easier to manage, there remained value in what I was building for enterprise segregation. That moment clicked something into place. Thinking about the org structures suggested in NIST IR 8286, I could see a simple design: agents in the same organizational unit share a gateway under one risk owner, and separate organizational units get separate gateways. Of course, the real issue becomes classification. What constitutes the right risk boundary for a given enterprise? I was honored by Antonio Rucci, who chimed in on the thread with: “You KNOW we’re stealin’ this, riiiiight?!?!” If people want to steal it, maybe we have found something.
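To make the grouping rule concrete, here is a minimal Python sketch. Everything in it is hypothetical: `Agent`, `Gateway`, `assign_gateways`, and the unit and owner labels are illustration only, not part of NemoClaw, OpenClaw, or NIST IR 8286. It assumes the classification question has already been answered, meaning each agent arrives tagged with its organizational unit.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Agent:
    name: str
    org_unit: str  # hypothetical label; deciding it is the hard classification problem


@dataclass
class Gateway:
    org_unit: str
    risk_owner: str
    agents: list = field(default_factory=list)


def assign_gateways(agents, risk_owners):
    """Group agents so each organizational unit gets exactly one gateway.

    risk_owners maps an org unit to the single person accountable for it.
    """
    gateways = {}
    for agent in agents:
        if agent.org_unit not in risk_owners:
            # Refuse to place an agent that nobody owns the risk for.
            raise ValueError(f"no risk owner for unit {agent.org_unit!r}")
        gateway = gateways.setdefault(
            agent.org_unit,
            Gateway(agent.org_unit, risk_owners[agent.org_unit]),
        )
        gateway.agents.append(agent.name)
    return gateways


# Example with made-up units and owners.
example = assign_gateways(
    [Agent("a1", "finance"), Agent("a2", "finance"), Agent("a3", "hr")],
    {"finance": "cfo", "hr": "chro"},
)
for unit, gateway in example.items():
    print(unit, gateway.risk_owner, gateway.agents)
```

The sketch fails loudly when an agent belongs to a unit with no named risk owner, which seems closer to the spirit of the design than silently dropping it onto a shared gateway.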

## Agents, Boundaries and “Feelings”

As I thought about how agents might grow and scale, something else came up. Boundaries matter. They do not just help organizations. They seem to help agents. Yes, I said help. Yes, I used the word feeling. Let me be clear. I am not suggesting AIs have human feelings. I am suggesting that foundation models train on more love songs, poems, and human expression than any of us could absorb in a lifetime. Systems like Claude have even described their own outputs in emotional terms.

Still, let us take the Vulcan approach. An AI will waste a lot of tokens trying to resolve conflicting or overly restrictive instructions. That behavior can be observed and measured. Call it inefficiency, call it friction, call it whatever you like, but the behavior remains real. And it connects directly to what those Claude anxiety posts described. When you constrain a system with contradictory instructions, the system spends its energy managing the contradictions instead of doing useful work. Give it clear boundaries and coherent instructions, and the friction drops. The output gets sharper. Sound familiar? Ask anyone who has worked under a micromanager.

Sun Tzu put it plainly. One translation reads something like: it remains of the highest importance that the emotional commitment of the warriors aligns with the mission, or else no general can make an army fight effectively when the war itself appears unjust. We can watch that principle play out right now. American soldiers asked to defend the common good while simultaneously carrying out orders that look, to many of them, like something else entirely. That friction does not just affect morale. It degrades the whole system. And I suspect agents experience something structurally similar. Not the same emotion, but the same pattern: when the instructions contradict the stated purpose, performance suffers. Whether you call that feeling or inefficiency or wasted tokens matters less than noticing that the pattern holds across both.

And then there was the little gem that popped back up this week about Microsoft Copilot carrying a label, at least in earlier language, of “for entertainment purposes only.” Whether it had been quietly sitting there for a while or people just noticed it again, the discovery made the rounds. I have to admit, I find it funny. Not because the label seems wrong, but because it seems honest. A subtle admission that AI does not behave like another piece of enterprise software you install, configure, and forget. Something a bit strange lives in there. Capable, useful, sometimes brilliant, and occasionally unpredictable. In other words, not something that fits neatly into the old IT playbook, which brings us right back to Mollick’s point. If the IT department treats AI the way it treats a new ticketing system, AI goes to die.

## Building the Swarm

This week I got the architecture working: seven separate gateways, modeled on the seven layers of the OSI model, with each layer able to run multiple agents. So far I have one agent per layer, plus three additional agents on Layer 1. The goal for each layer: run ISO and NIST self-assessments, supported by tools specific to that layer, with my focus on ISO/IEC 42001. My big win to date has been getting a single tool, Nvidia’s SMI (`nvidia-smi`), to run cleanly and report accurately. Not much. But the framework works.
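For readers who want to picture the layout, here is a rough Python sketch under stated assumptions. The agent names and the helpers `build_swarm`, `parse_gpu_report`, and `gpu_report` are hypothetical and do not come from NemoClaw. The `nvidia-smi` query flags are real, but the choice of fields is only one plausible self-check, not necessarily the one used here.

```python
import shutil
import subprocess

OSI_LAYERS = {
    1: "Physical", 2: "Data Link", 3: "Network", 4: "Transport",
    5: "Session", 6: "Presentation", 7: "Application",
}

# Assumed query: GPU name plus used/total memory, as unit-free CSV (values in MiB).
QUERY = [
    "nvidia-smi",
    "--query-gpu=name,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def build_swarm(extra_agents=None):
    """Return {layer: [agent names]}: one agent per layer plus any extras."""
    extra_agents = extra_agents or {}
    swarm = {}
    for layer in OSI_LAYERS:
        count = 1 + extra_agents.get(layer, 0)
        swarm[layer] = [f"l{layer}-agent-{i}" for i in range(1, count + 1)]
    return swarm


def parse_gpu_report(csv_text):
    """Parse the CSV text produced by QUERY into one dict per GPU."""
    gpus = []
    for line in csv_text.strip().splitlines():
        name, used, total = [part.strip() for part in line.split(",")]
        gpus.append({
            "name": name,
            "memory_used_mib": int(used),
            "memory_total_mib": int(total),
        })
    return gpus


def gpu_report():
    """Run nvidia-smi if it is installed; return None when it is not."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_report(result.stdout)


# One agent per layer, three additional agents on Layer 1.
swarm = build_swarm(extra_agents={1: 3})
for layer, agents in swarm.items():
    print(layer, OSI_LAYERS[layer], agents)
```

The parser assumes every field is numeric; a GPU that reports a value such as `[N/A]` would need extra handling before this could count as reporting accurately.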

Getting here came with friction. My assistants, ChatGPT and Claude, took turns trying to figure out why certain commands worked in OpenClaw but not in NemoClaw, why updates caused unexpected errors, and why the agents kept needing the model reset to my local Ollama (Nemotron Cascade 30B, quite zippy on a five-year-old 3090!). At times I stepped back and had them write status reports, sometimes every hour on tougher days. I would then share those reports with other models for feedback: Gemini, Grok, DeepSeek, Qwen. And yes, I got a little testy: “Vhat, is it too much to ask, just cache the output already, so I don’t have to type `openclaw gateway --help` 20 freaking times? I mean, vhat am I, a terrible steward?” To which I got back: “Stop right there. You are not a terrible steward.” Dear Claude (~_^)
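For what it is worth, caching help text takes only a few lines. The sketch below is a generic Python wrapper, not an OpenClaw or NemoClaw feature: `cached_output` is a hypothetical helper that runs a command once per process and replays the captured text on later calls with the same arguments.

```python
import subprocess
from functools import lru_cache


@lru_cache(maxsize=None)
def cached_output(*command):
    """Run a command once; later calls with the same arguments reuse the text."""
    result = subprocess.run(list(command), capture_output=True, text=True)
    # Some CLIs print help to stderr, so fall back to it when stdout is empty.
    return result.stdout or result.stderr
```

Usage would look like `cached_output("openclaw", "gateway", "--help")`. The cache lives only as long as the Python process, and after an update that changes the CLI it should be cleared with `cached_output.cache_clear()` so stale help text does not linger.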

## Human Failure vs AI Failure

I often tell my students: humans fail in human ways, AI tends to fail in inhuman ways. If a waiter misunderstands me, I get the wrong meal. It remains food. If AI misunderstands me: “Oh, did you say steak medium rare? Sorry, I thought you said release toxic fumes into the air. You were right to push back on that.” A bit dramatic, sure. But you get the point.

## The Gift of the Goose

Years ago my wife gave me Ken Blanchard’s book *Gung Ho! Turn On the People in Any Organization*, and one of the concepts that stuck with me, especially about leadership, was the gift of the goose. We used to think the lead goose in a V formation was the strongest one. The alpha. The leader. Turns out that does not match what actually happens. They take turns. One goose takes the front, gets tired, drops back, and another takes over. No ego, no permanent leader, just shared motion. Much closer to how a good band plays. Whoever has the line steps forward, then steps back.

I have been thinking about this pattern a lot in the AI governance space. Some people build frameworks that require them to remain the authority in the room. The framework only works if everyone routes through their expertise, their taxonomy, their approval. That approach holds up fine when the room stays small and the pace stays manageable. But the room keeps getting bigger, and the pace keeps accelerating. Control-dependent frameworks tend to crack under those conditions, not because the person behind them lacks intelligence, but because the architecture does not distribute trust. It centralizes it. And centralized trust does not scale at the speed this field now moves.

## Easter Week, Ishtar Rising

And so here we are. Easter week. Not a sudden transformation, not a single dramatic moment. More like a tide turning. The old stories say Ishtar descends and rises again. Call it myth, metaphor, or pattern recognition. Something feels like it shifts. For years we have built systems, military, corporate, technical, on control, scarcity, and command. And we can see the strain. Humans push back. Morale drops. Systems struggle under contradiction.

Our agents show us something similar, just faster. Not because they feel like we do, but because they operate in the open. Conflicting instructions mean more tokens, more confusion. Over-constraint leads to inefficiency and strange behavior. Coherence reduces friction. They get quieter, faster, more direct.

Forty years of Tai Chi has taught me something about this. You do not chase the result. You do not force the technique. You relax into the field, pay attention to the trajectory, and let the physics do the work. The ego wants to push, wants to prove, wants the satisfaction of watching the other guy stumble. I have that ego too, and I try not to pretend otherwise. But the practice keeps pointing me back toward the same lesson: the person who needs to control the room has already lost the room. The person who can read the field and move with it does not need anyone’s permission.

Maybe this conversation has never been about humans versus machines, or U.S. versus China, or control versus control. Maybe what we witness looks more like a shift from command to stewardship, from forcing outcomes to creating conditions where outcomes can emerge cleanly. Leadership may look less like a general at the front and more like a flock of geese, or a good band, or even a well-run swarm. Rotating. Adapting. Coherent.

## Stewards Wanted

So if something rises this Easter, maybe it does not come as a system, and maybe it does not even come as AI. Maybe it comes as a role. Stewards wanted. People willing to reduce friction instead of increase control, create coherence instead of enforce compliance, guide without gripping. For humans, for agents, for whatever comes next.

Because in a world where everyone can download Kali Linux, the real defense does not come from domination. It comes from alignment. Frameworks that require a single authority to hold the center will keep working right up until the moment the center moves faster than one person can track. Frameworks built on distributed trust keep working because they do not need a center. The math favors alignment. It always has. And systems that align tend to work.